My Role

Designing an AI agent that meets lenders where they work

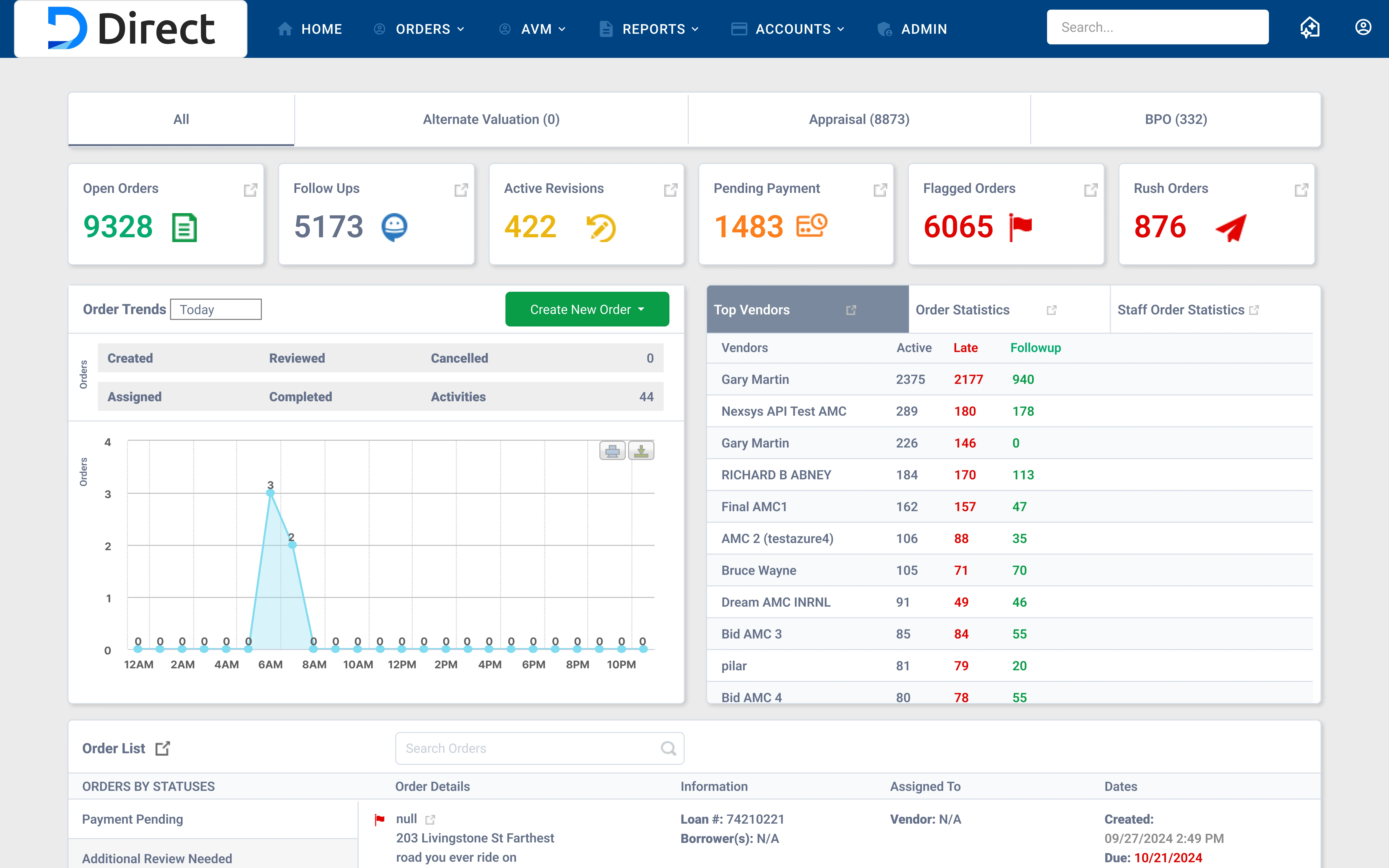

Led end-to-end design for an AI agent embedded throughout Direct, ValueLink's appraisal management platform for lenders, featuring a contextual chat interface and recommended actions. I worked across research, concept design, and detailed UX, translating complex order management workflows into an intelligent experience that gives lenders the right information and next steps at the moment they need them.

Business Goals

- Grow revenue by 30%.

- Ship an AI feature that improves the client order workflow.

- Reduce customer support overhead.

Research

Forming hypotheses and finding pain points

I kept a close eye on Microsoft Clarity to observe user behavior and form hypotheses. I also led user interviews to identify friction points, validate assumptions, and define what lenders actually need from an AI assistant in their daily workflow.

User Behavior

- Opened multiple tabs to cross-reference order details and deadlines.

- Frequently contacted PMs or internal teams to ask for status updates already visible in the system.

- Relied on memory and personal notes to track which orders needed follow-up.

User Pain Points

- High order volume makes it difficult to prioritize what needs immediate attention.

- Tracking tasks manually across different orders creates mental overhead.

- Reviewing order communication requires opening each order individually, slowing down operations.

- Keeping up with ongoing changes across orders requires constant monitoring.

Scope Pushback

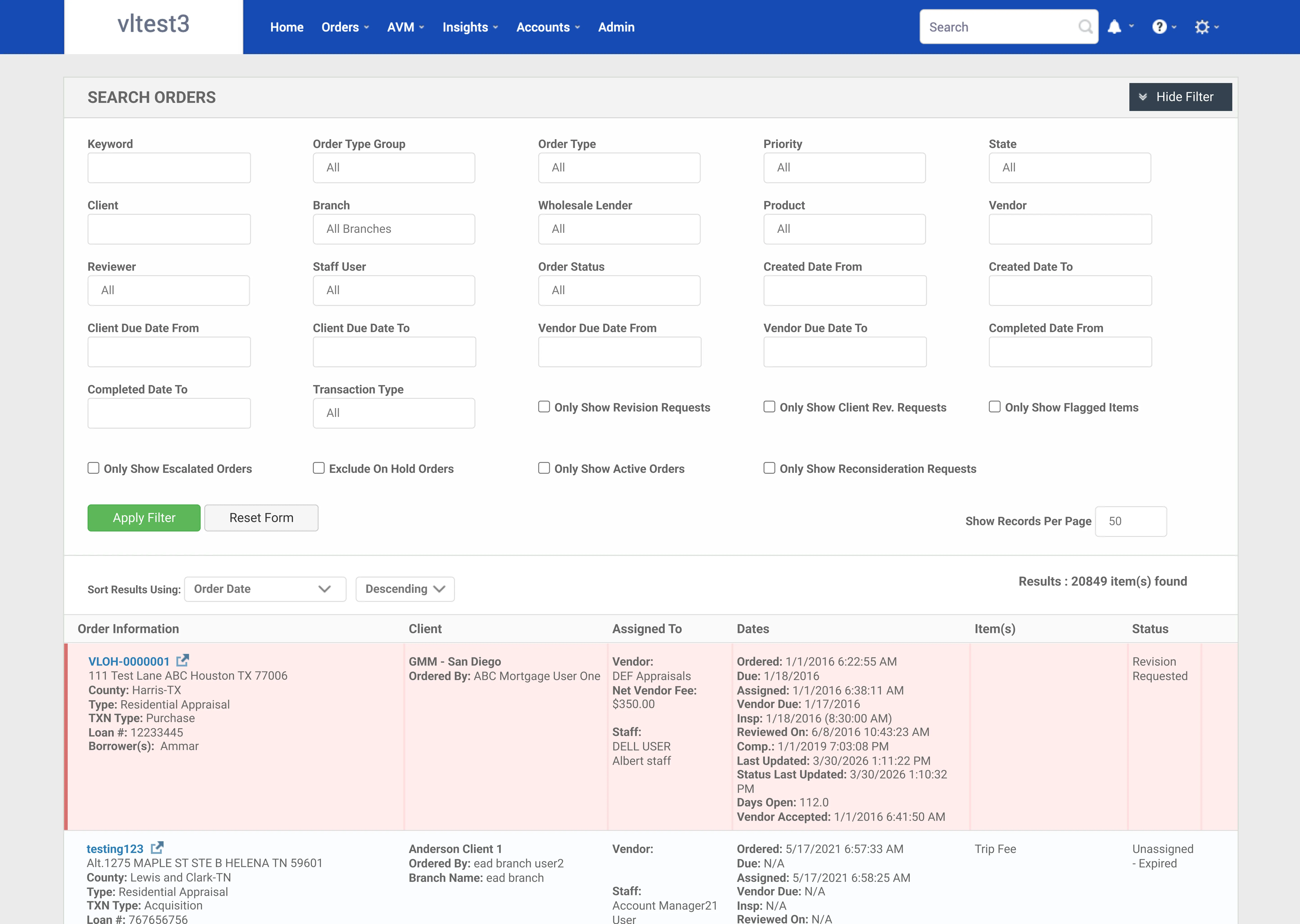

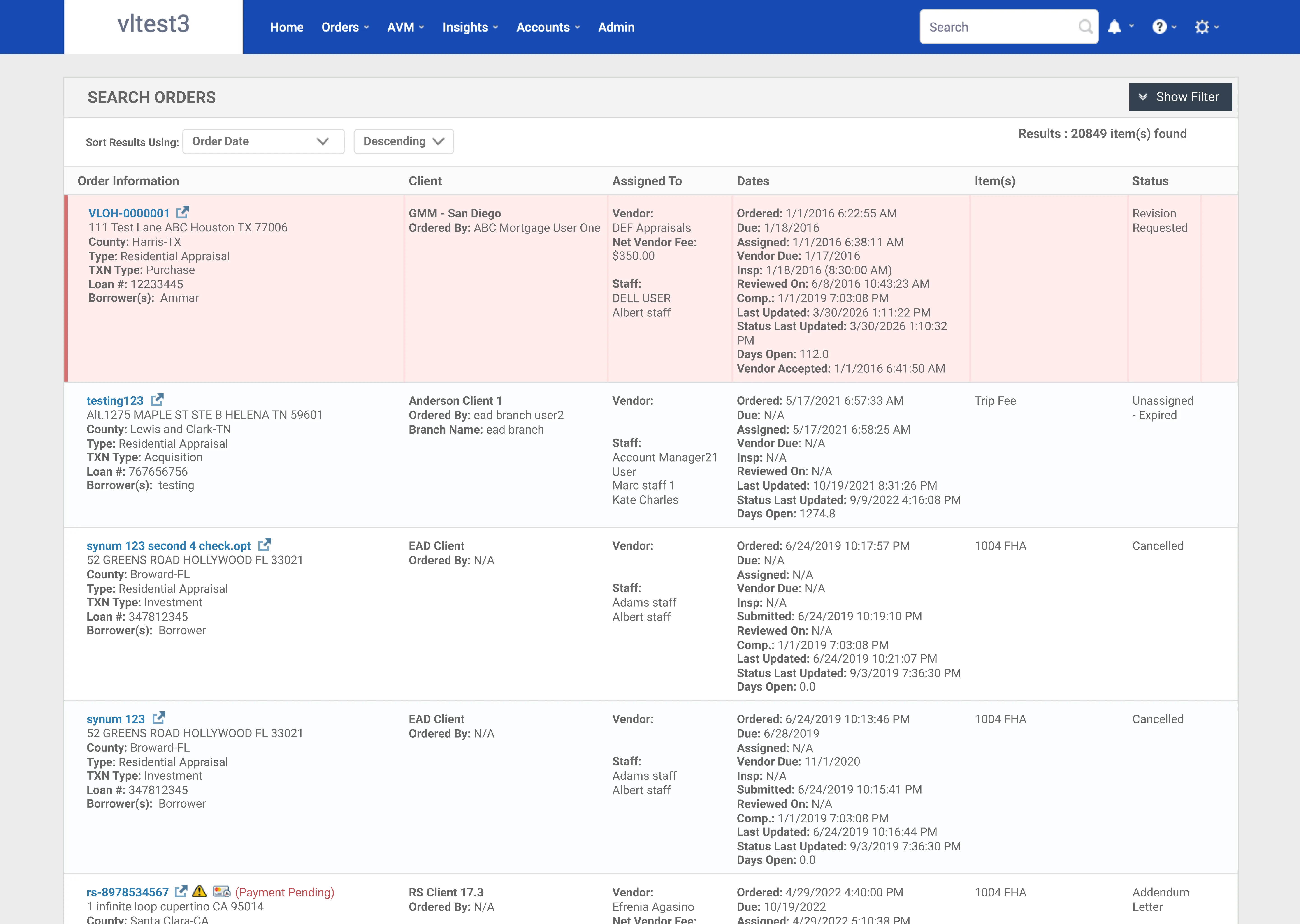

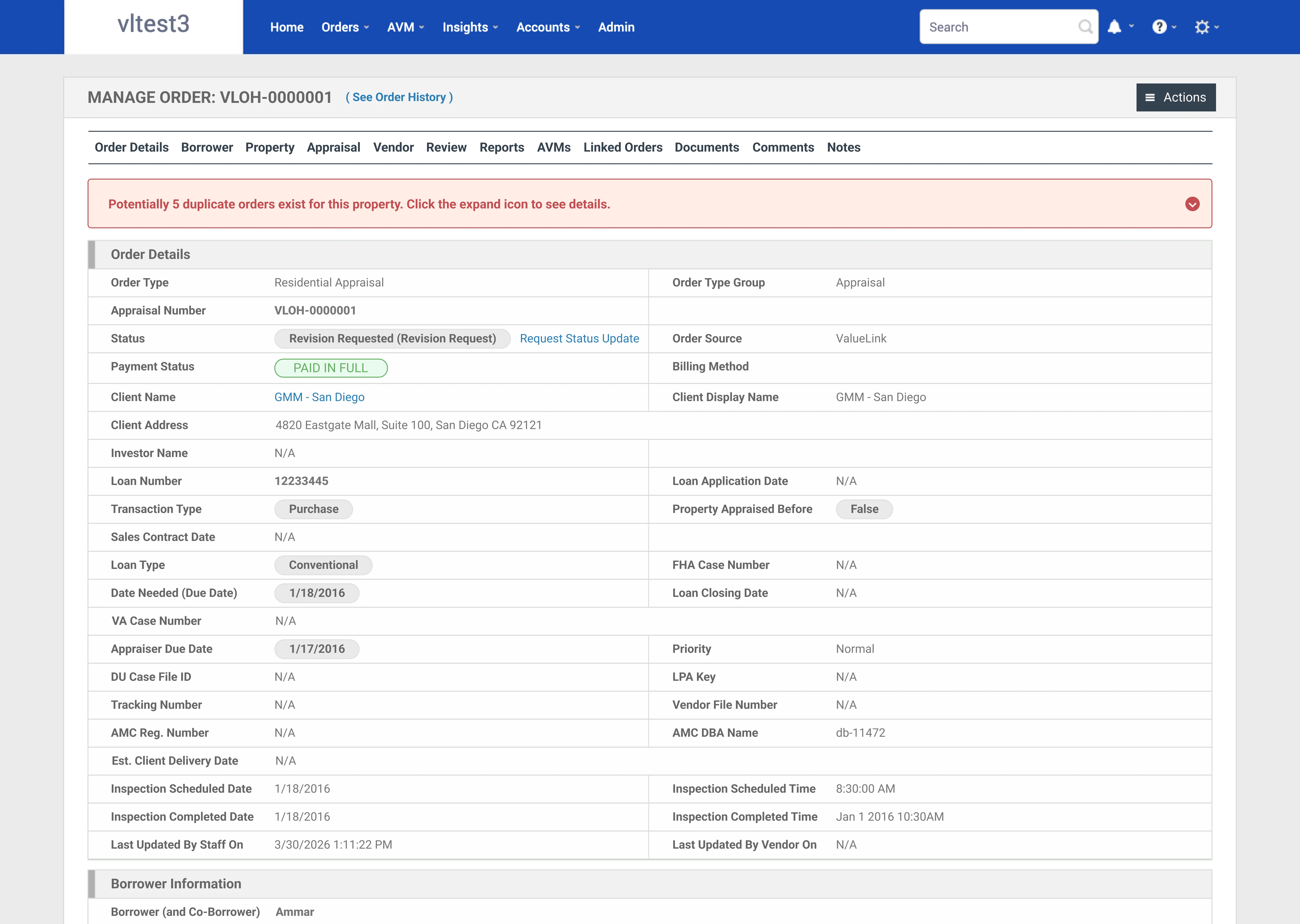

Pushing back with upper management on the scope of the agent

Initially, upper management wanted the agent to summarize order details and display the main points as bullets in an AI box at the top of the order details page. I pushed back. Reducing the agent to a summary tool would not solve real user problems or help us meet our business goals. I built a quick prototype to show in our meetings so stakeholders could see exactly what that limited version would look like and why it fell short.

Why this would not work

The users we are designing for are already overwhelmed. They are opening multiple tabs to cross-reference orders and deadlines, contacting internal teams for status updates that are already in the system, and relying on personal notes to track follow-ups. A summary box does nothing to fix that. I argued that the agent needed to work across the entire workflow rather than only on the order details page. Stakeholders aligned, and we moved forward with a broader scope.

Design

Design Inspiration

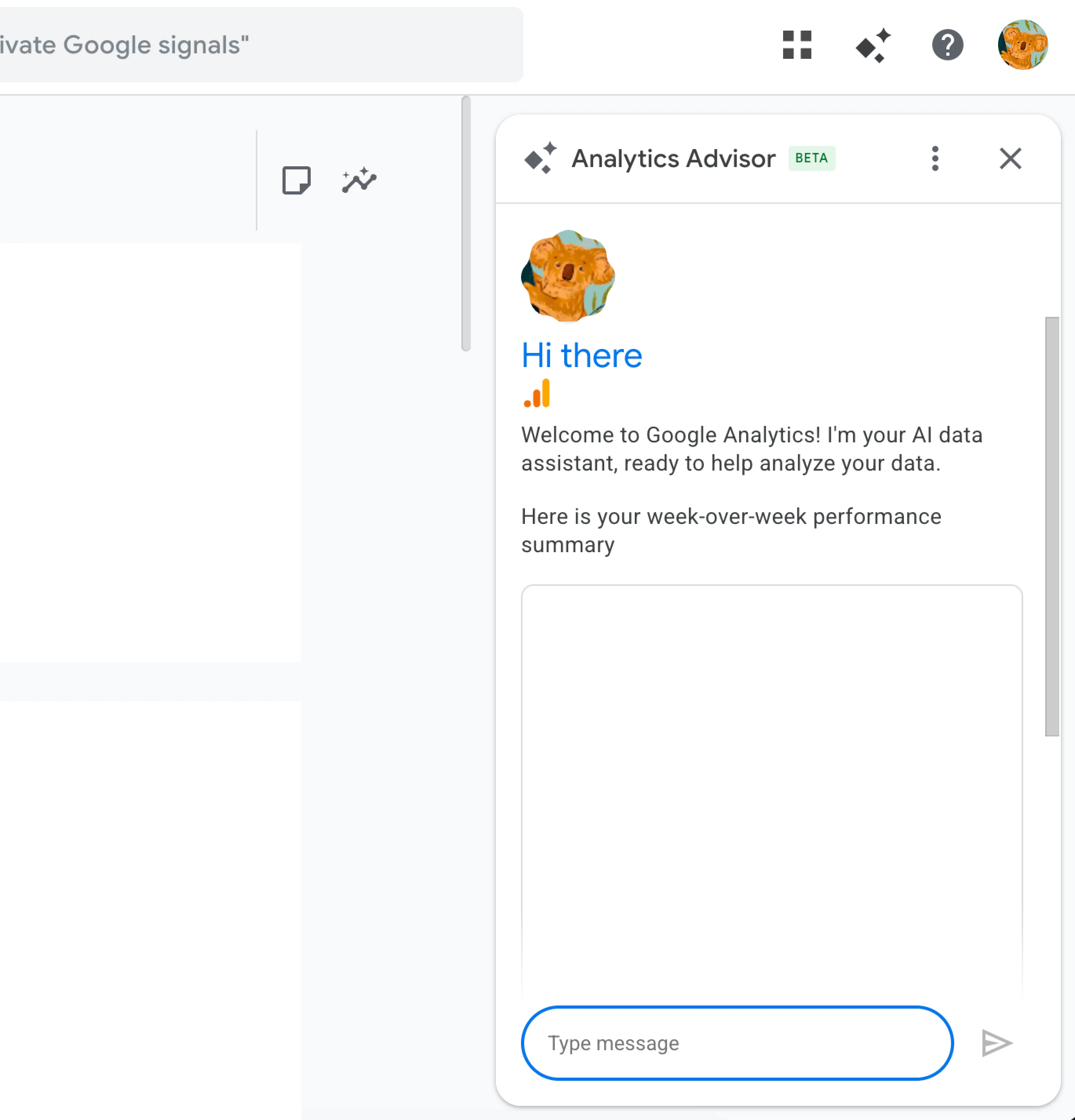

I explored how AI agents work across different platforms.

Google Analytics

Claude Plugin

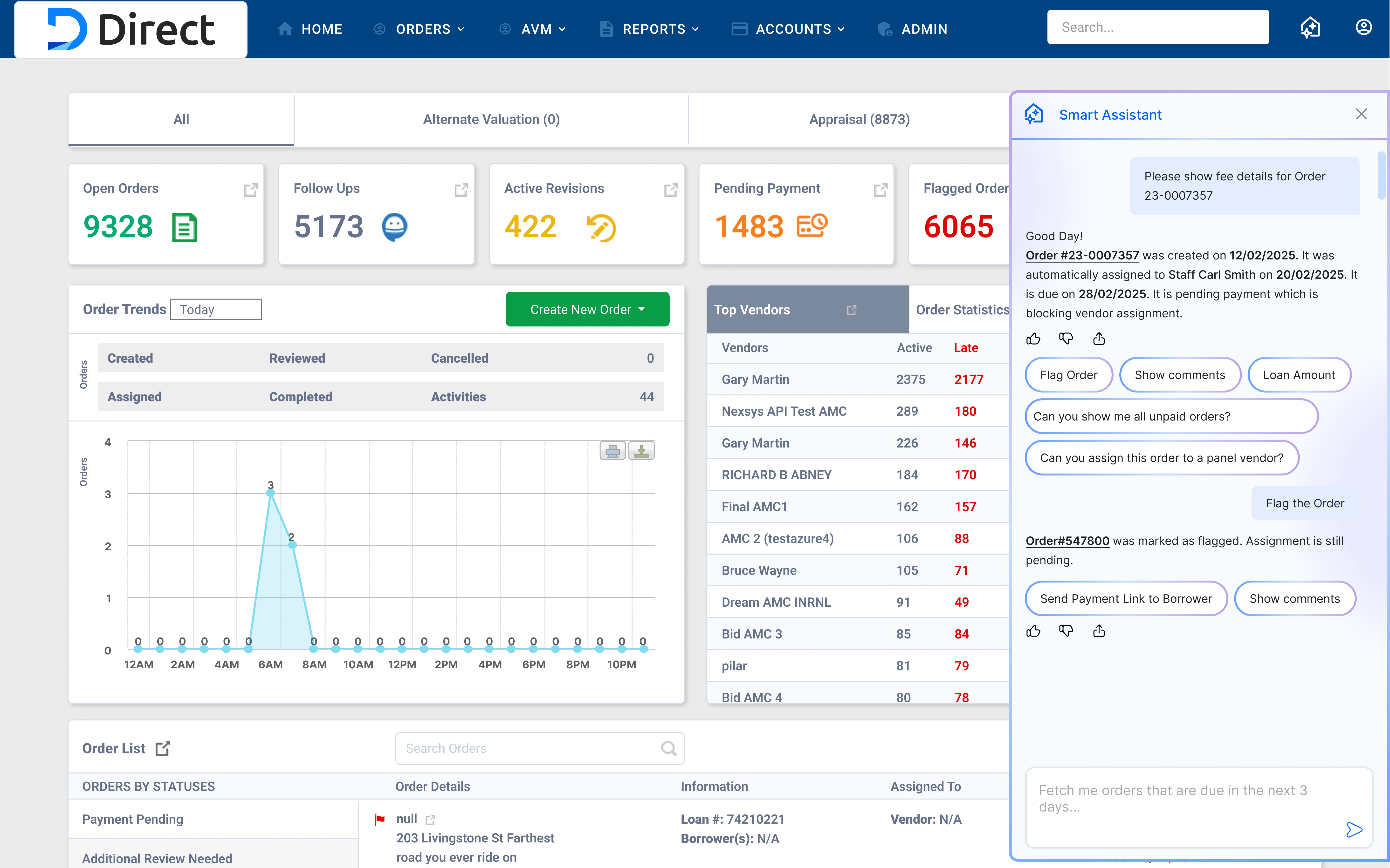

Rejected Design Approach

This approach covered the content behind it, so I did not move forward with it.

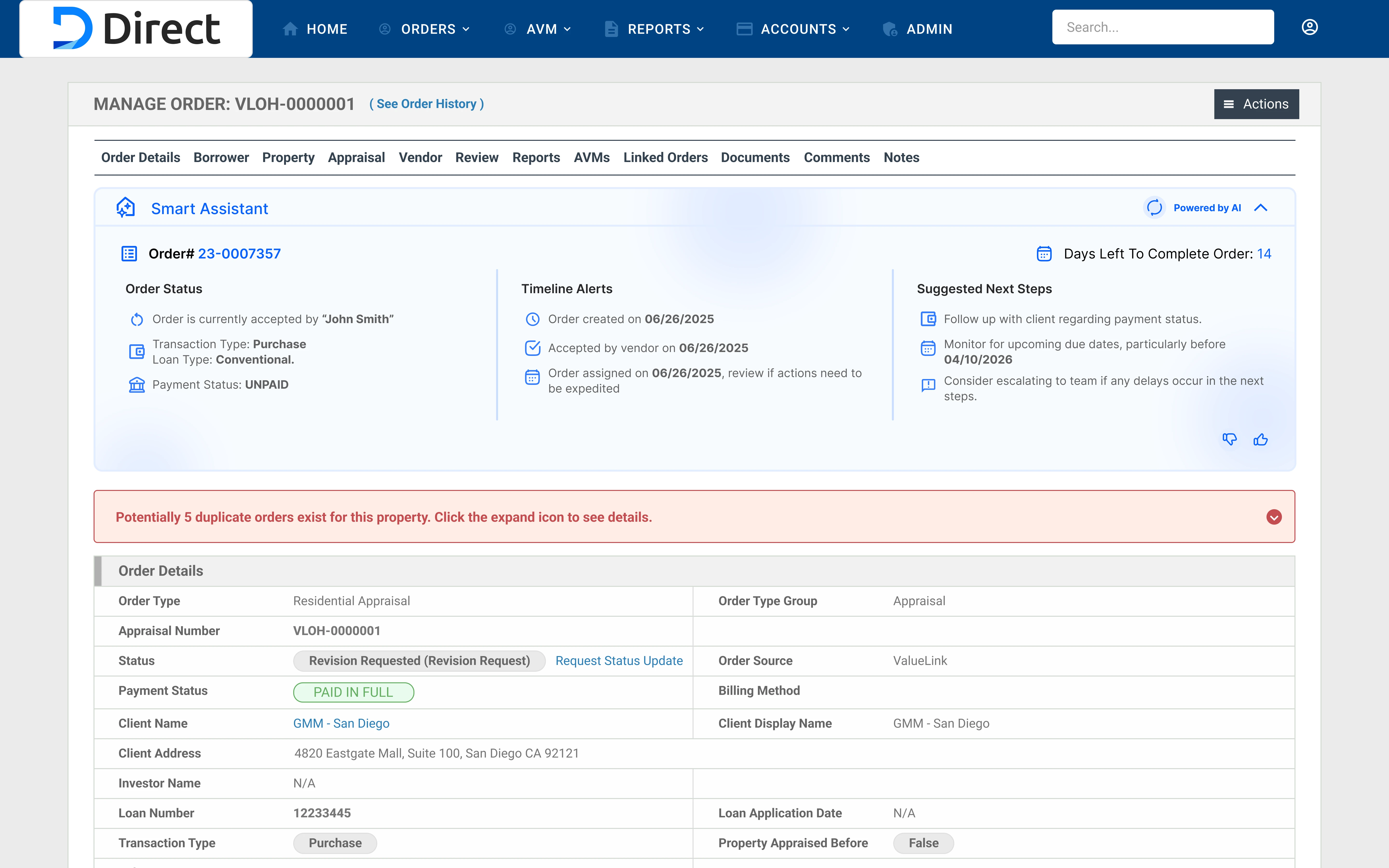

Accepted Design Approach

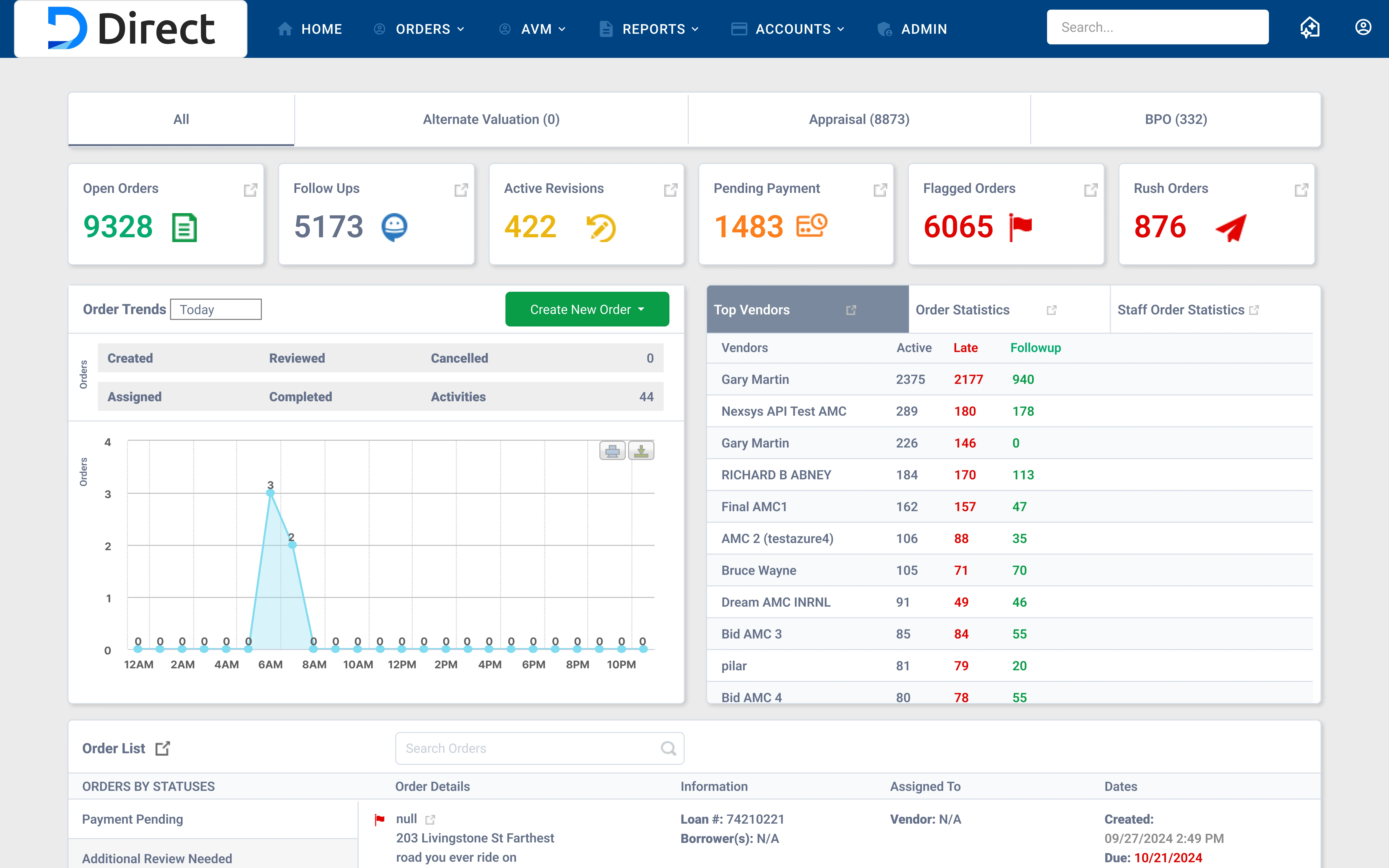

Every page in Direct fits its content within a centered container. The consistent grid structure means any page can compress horizontally to make room for a side panel without breaking the experience. Nothing gets hidden or overlapped. The content simply adjusts within its container and the agent lives alongside it.

Garnering user trust with the conversational AI agent

AI Hallucination Problem

The assistant may misinterpret industry-specific terms and generate confident but incorrect insights, leading to confusion and poor decisions.

Solution

I reviewed conversations twice a week to refine the agent's understanding of industry terms and improve response accuracy over time.

Design

Final Designs

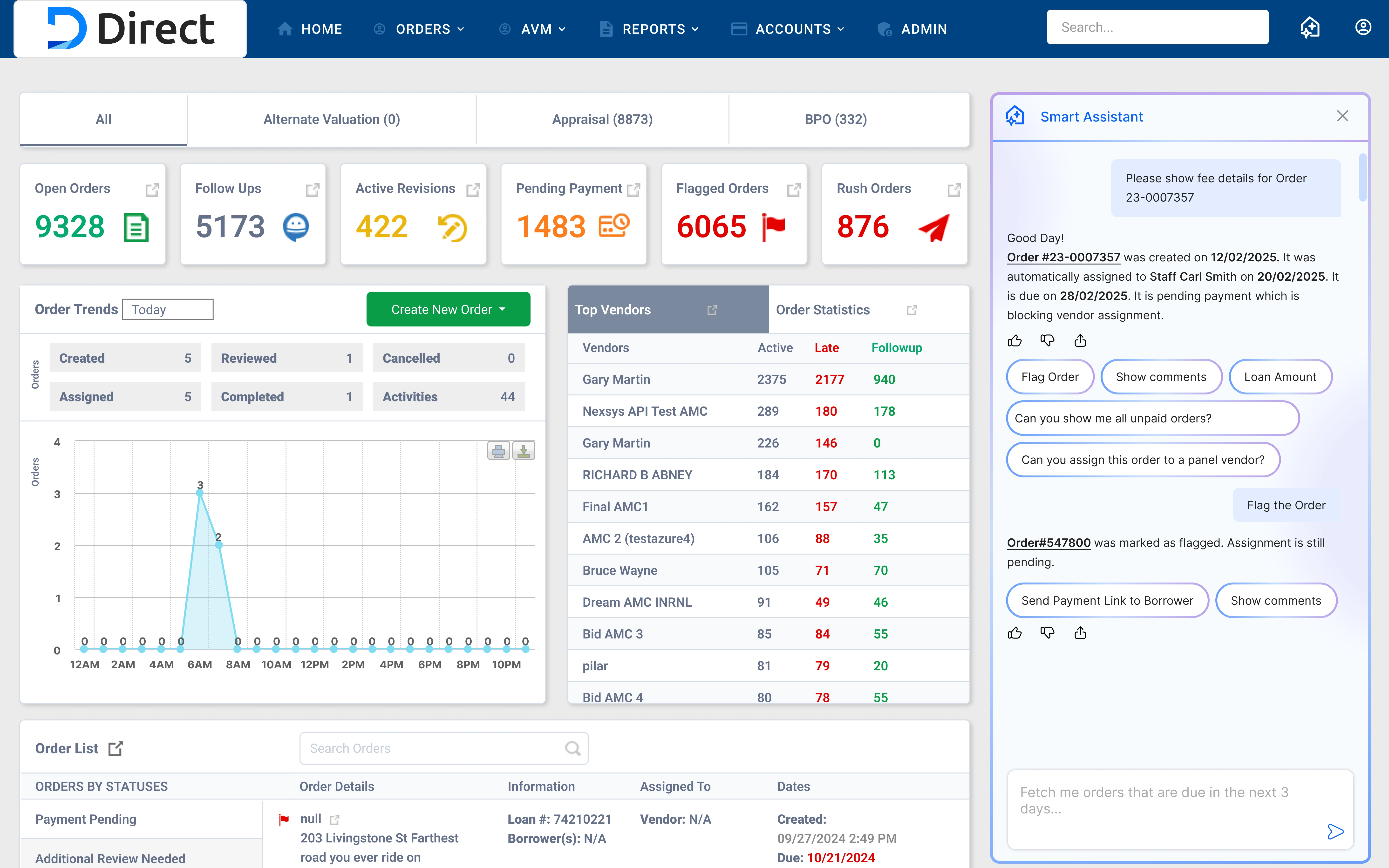

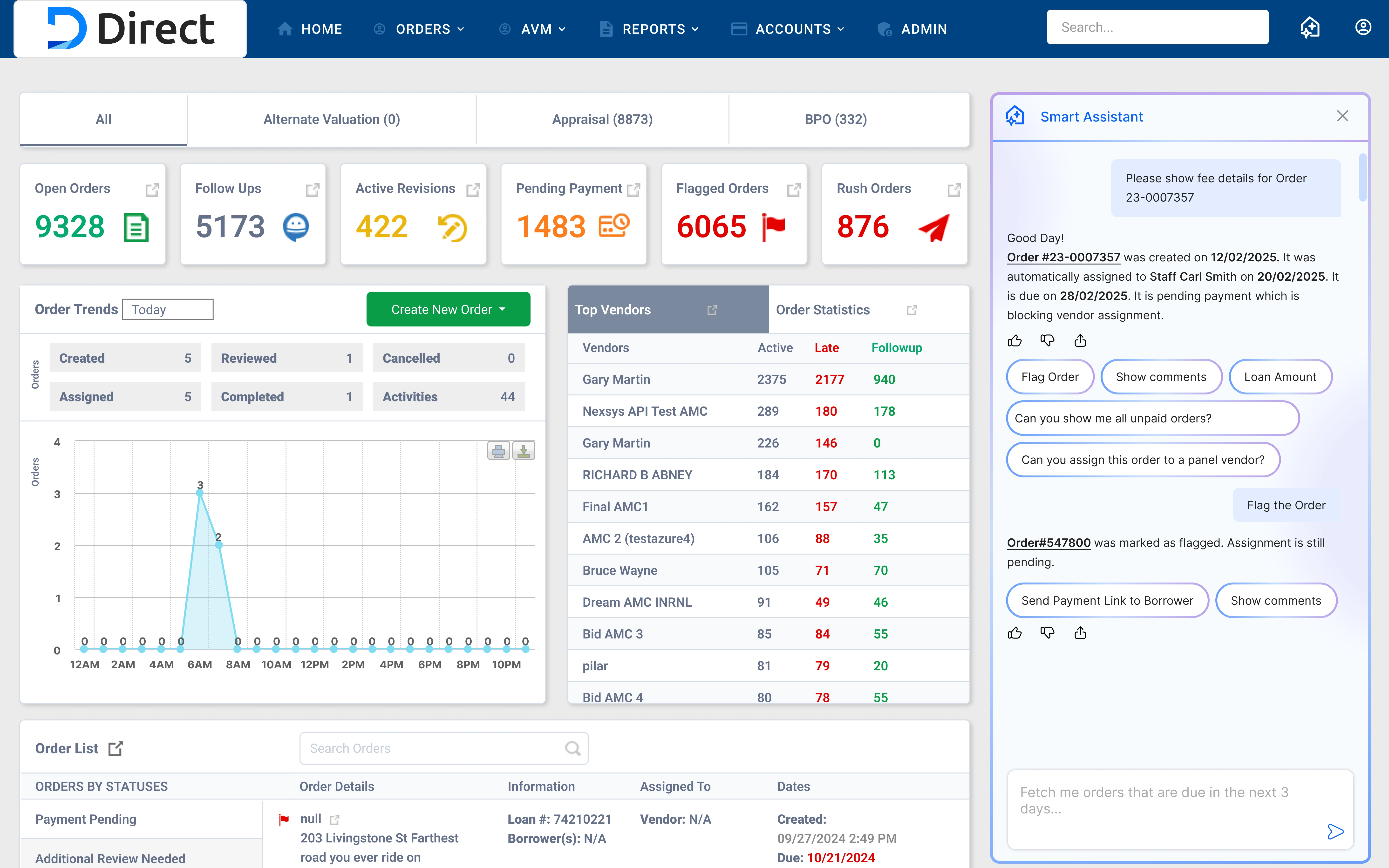

AI Agent for Managing Orders in Direct.

I did not design the Direct dashboard

I designed the AI agent, which opens when the user clicks its icon in the top menu

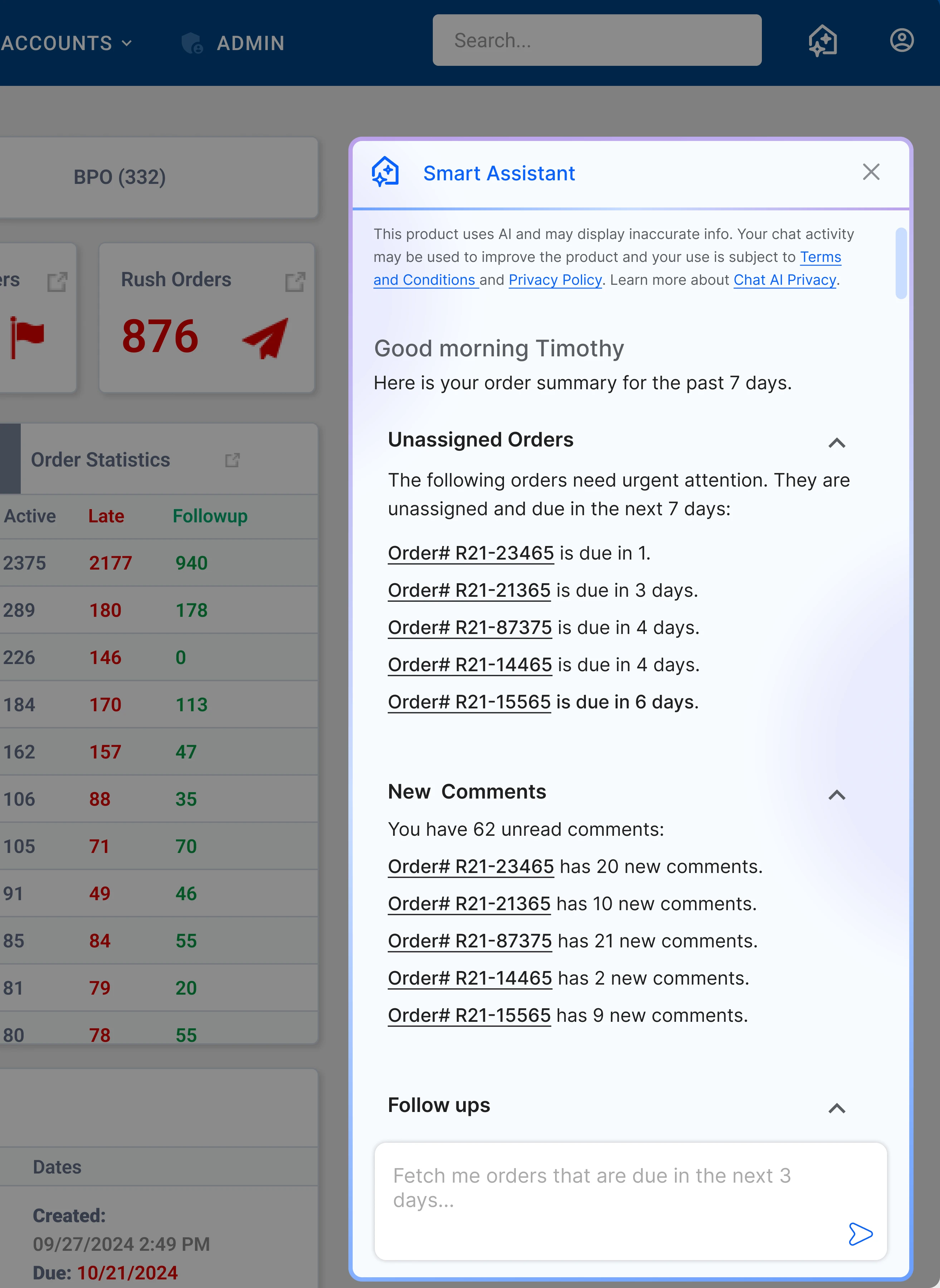

The welcome screen shows an overview of orders from the last 7 days

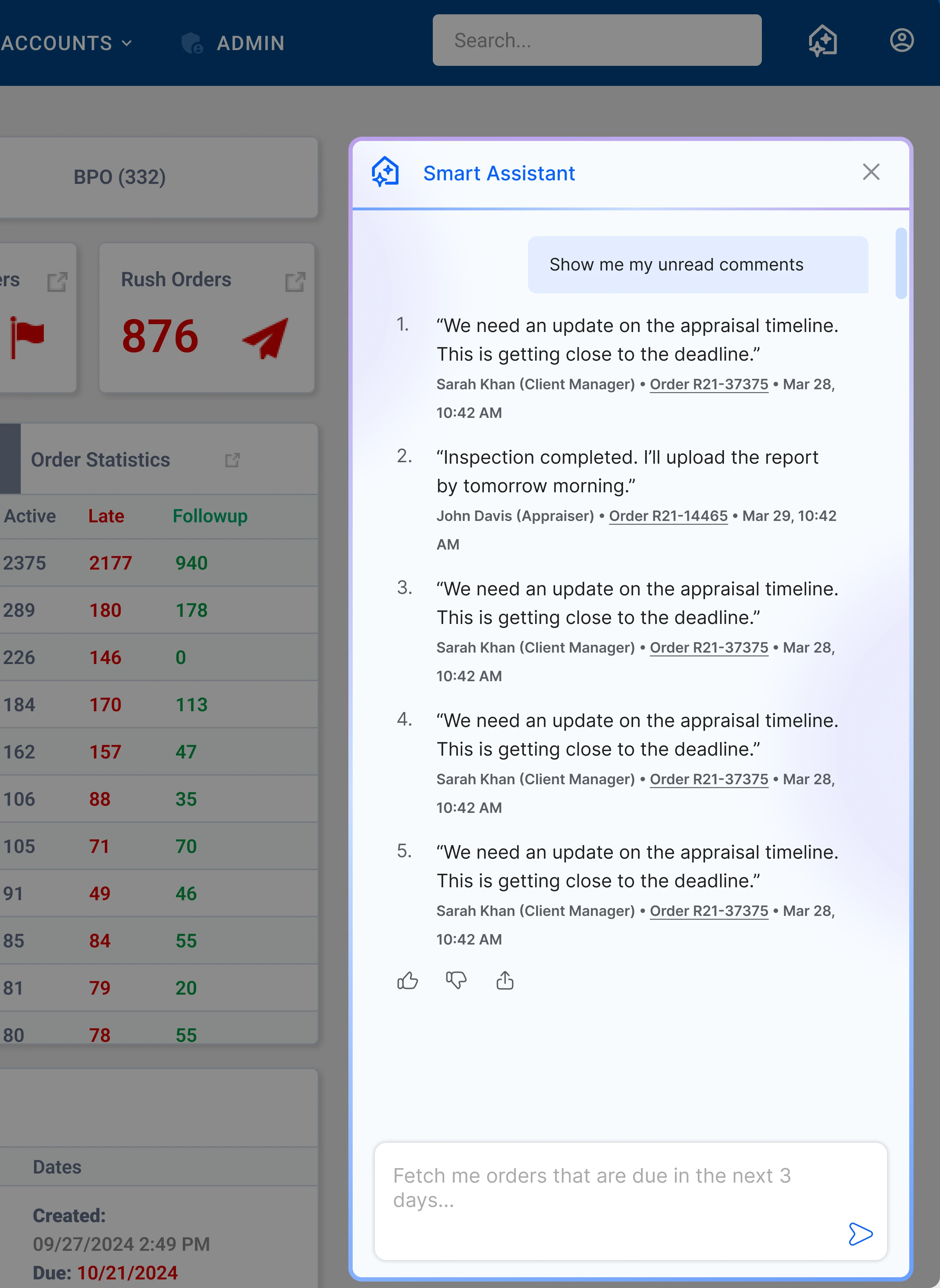

Example of a user requesting to see unread comments

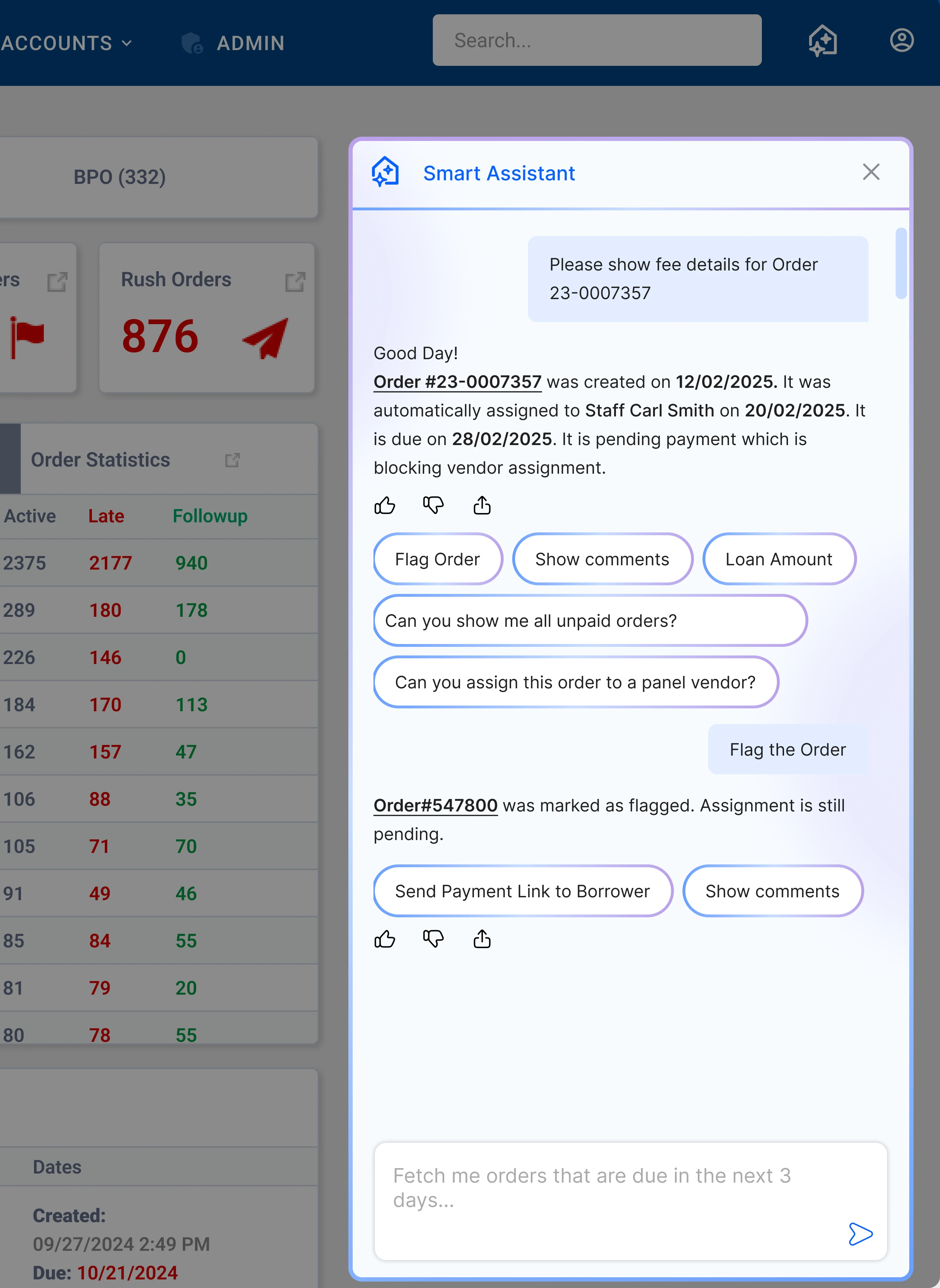

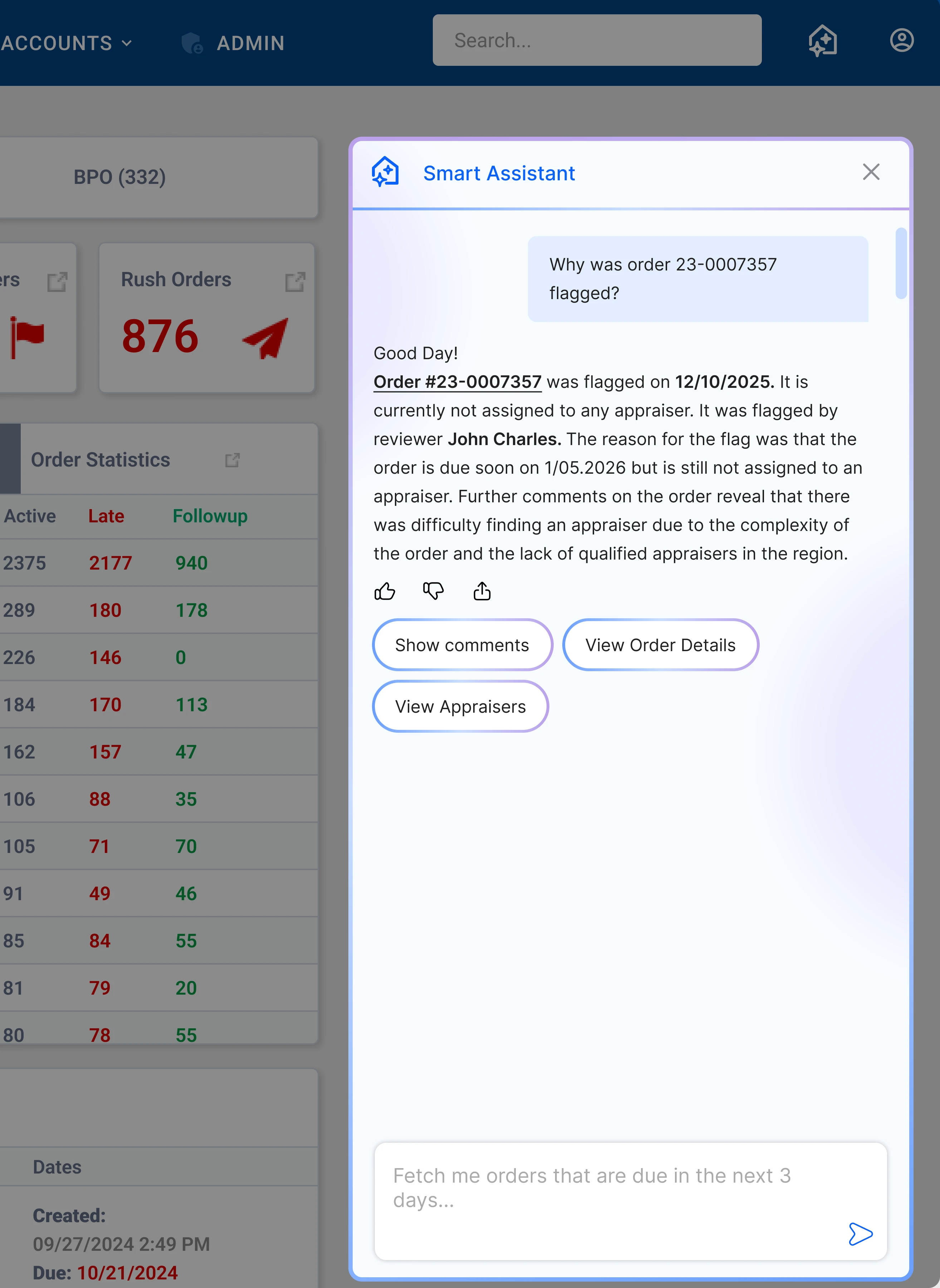

User can also ask specific questions about their orders or select from suggestions

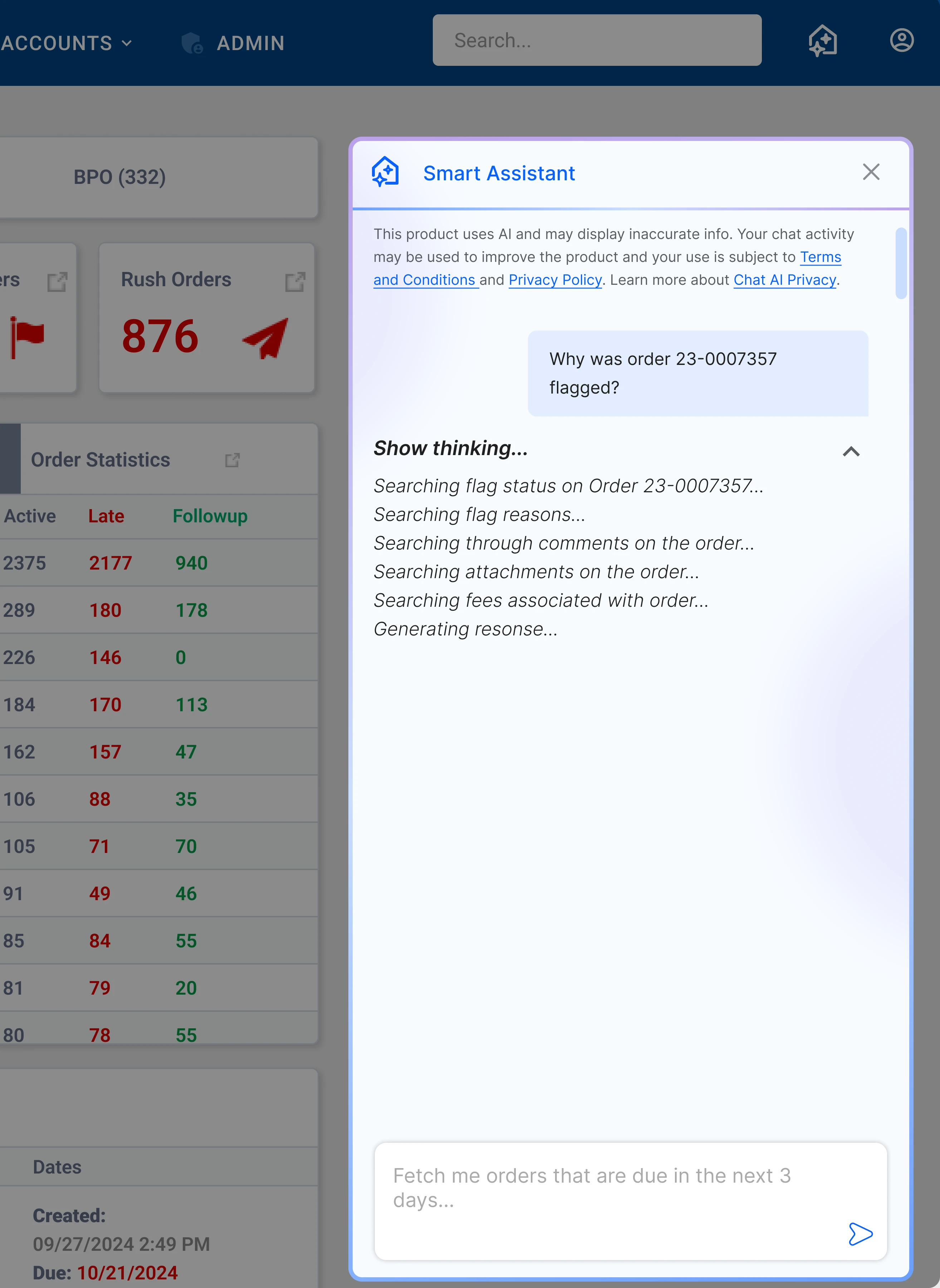

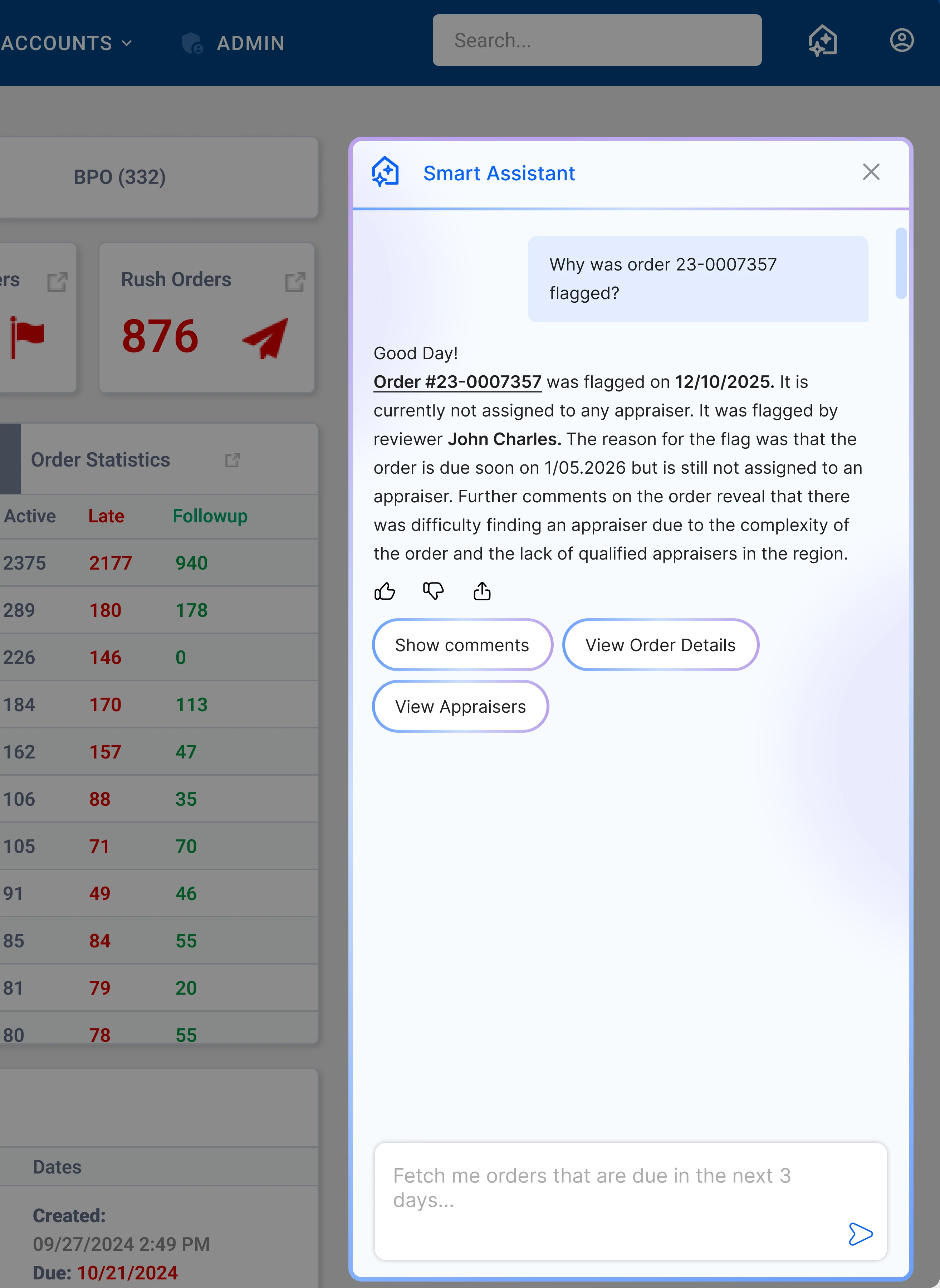

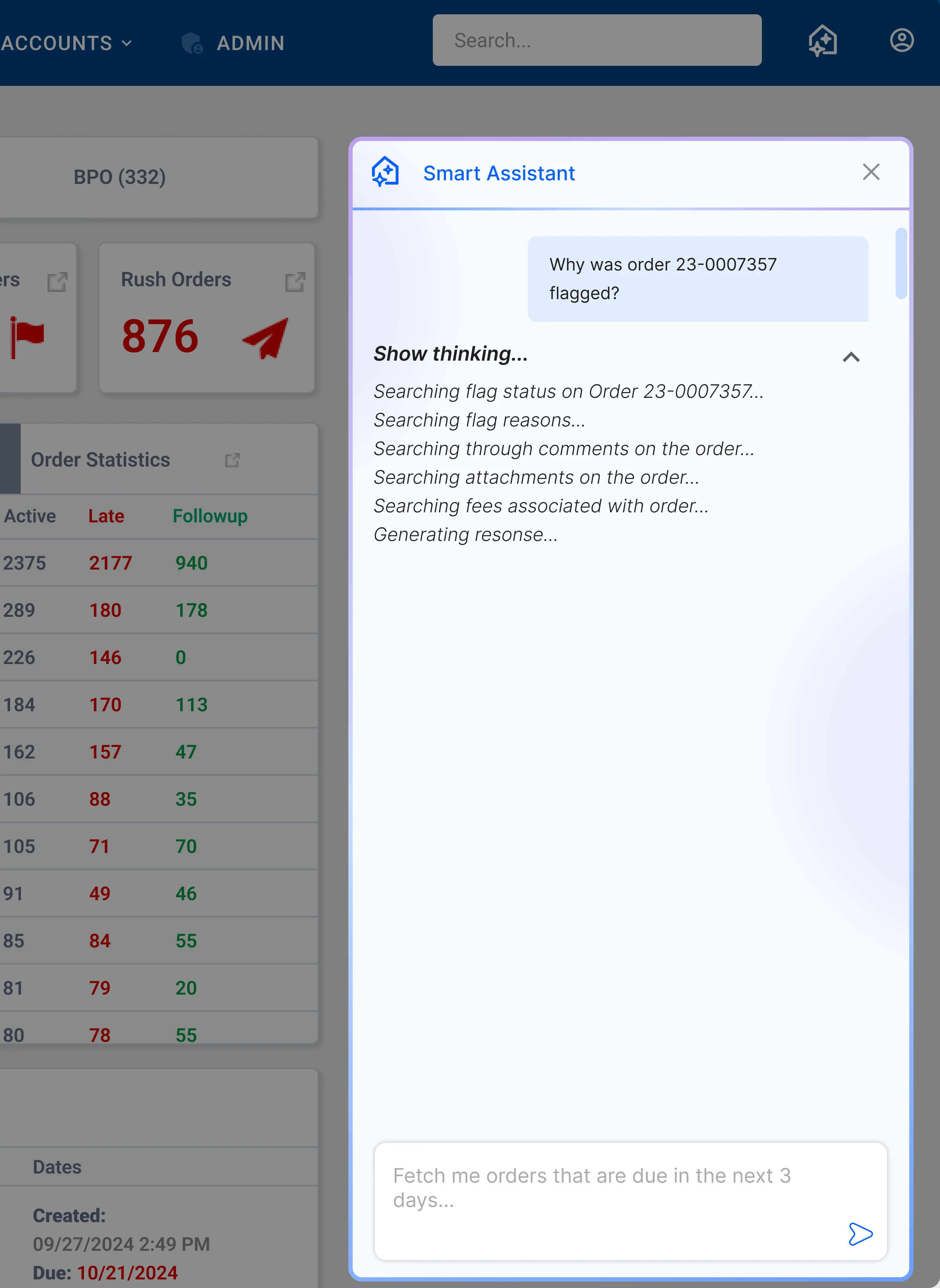

Showing the agent's thought process to garner user trust

Example of chat with agent

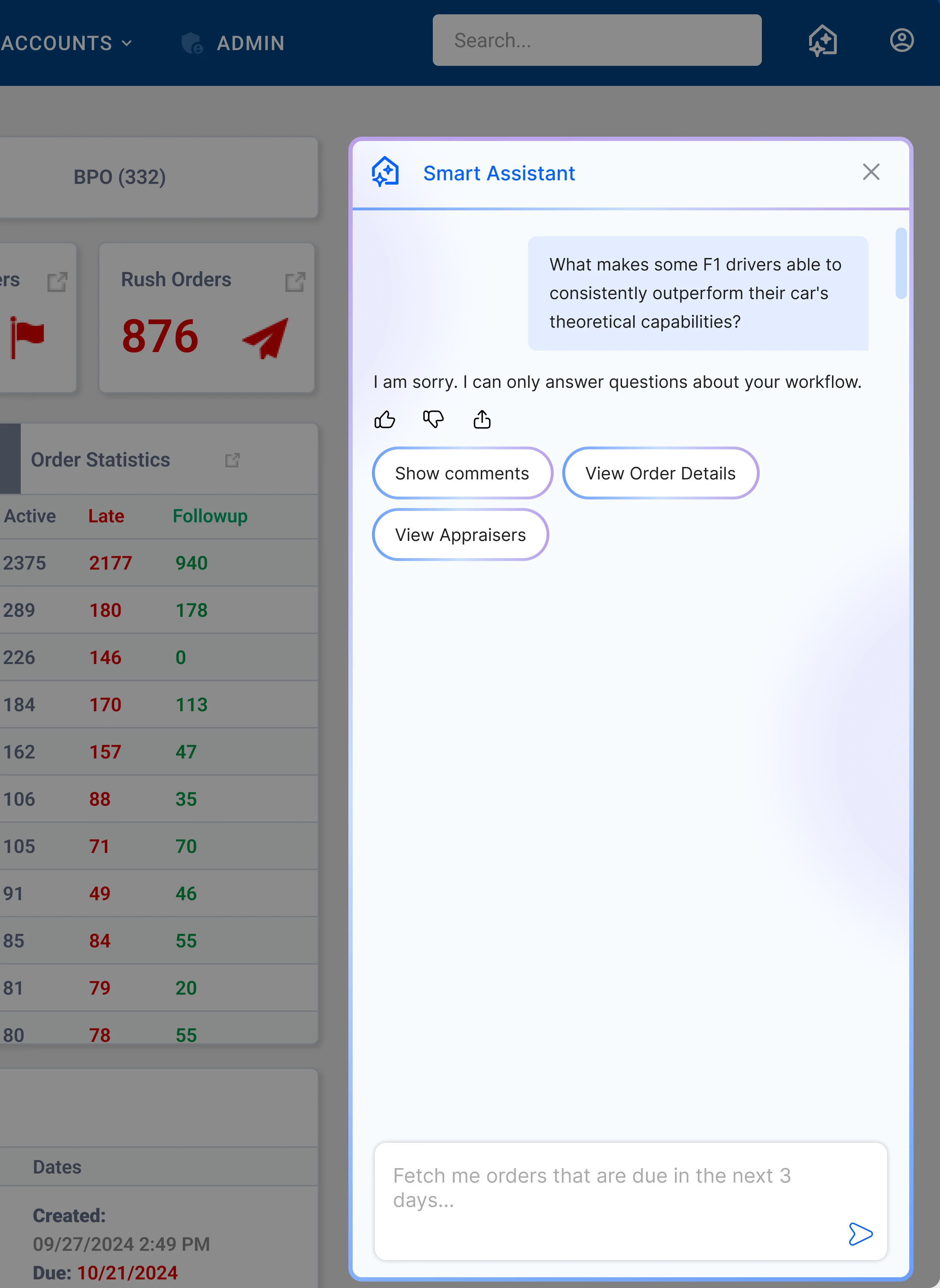

Example of a user asking a question outside the agent's scope

How this conversational AI agent solves user problems

Pain: Constantly switching between tabs

The assistant gives a centralized overview of orders due soon, so lenders don't have to jump between screens.

Pain: Relying on customer support for updates

The assistant instantly surfaces order statuses and updates, reducing the need for back-and-forth communication.

Pain: Tracking follow-ups manually

The assistant highlights orders that require attention, so lenders can act without mentally tracking everything.

Pain: Reading comments order by order

The assistant aggregates unread comments across orders, so lenders can review updates in one place.

Pain: Losing track of overall workload

The assistant provides a quick, digestible summary of orders and workflow, so lenders always know where things stand.

Pain: Breaking workflow to find information

The agent is embedded throughout Direct, so lenders can access order information from anywhere without losing context.

Testing and Feedback

Putting it to the test

To ensure accuracy and usefulness in a high-stakes B2B environment, the AI agent was initially rolled out to a small group of trusted clients rather than launched at full scale. This allowed us to validate its performance in real workflows while minimizing risk. After validation, it was gradually rolled out to more clients.

Testing

I closely monitored Microsoft Clarity, Hotjar, and Mixpanel to analyze user engagement with the agent. I also sat down with clients to hear what they had to say. These insights helped me improve the agent's responses.

Improving conversations and responses

I learned that when users asked about an order, they also wanted the agent to link that order's attached documents in the chat, so they could download them directly through the agent instead of looking for them themselves. This is one example of how I made the agent's responses more helpful: showing the information users want without overwhelming them.

User Feedback

"We love using the chat that you guys have come out with. It makes order processing so much faster for us." - ValueLink client

Reflections

What I Learned

Think in systems

This project required me to think in systems. The real complexity was in structuring modular services for user intent, order retrieval, and action execution (such as performing actions within specific orders).

What I want to improve on

I want to give the agent the ability to answer questions about the constantly changing government regulations around appraisals and what they mean for the user.

Results

Product Impact

Improvement in Order Completion

Drove a 35% reduction in order delays, as measured in Mixpanel.

Decrease in Dependency

Decreased user dependency on Customer Success by 45%, freeing up Customer Success for other tasks and giving users more agency.

Faster Order Completion Time

Mixpanel tracking revealed that orders are being completed 30% faster as dependency on Customer Support decreases.